Is It Complicated?!?!?: 5 Questions For Thinking About Your Relationship with GenAI

A Talk That Didn't Happen

This here is a talk that I prepped for but, because of inclement weather, wasn’t able to deliver. I was asked to do a talk for a student-AI group that was having their first gathering as they kicked off their organization at Salem State University (my alma mater for undergrad).

I was asked to craft something short (15 minutes) to help students think about careers, work, and life with AI. And, well, that’s not an easy thing to do for a student audience in the allotted time. But I like a challenge that gets me thinking, and this gave me a moment to reflect on global and somewhat concise ways of framing the core of my advice for figuring out AI.

I decided to structure the talk around the questions that I felt would be important for students to be thinking about as they approached working with AI—the things they should be holding space for as they considered where and how these tools fit into their work.

So, you know the drill! You can check out the Slides and the Resource document; the full text is below.

Thank you for having me and I’m honored to be at this first gathering–as an alumnus and as someone who is also trying to find his way through this all.

I want to start by saying something important up front: I’ve got questions, not answers. I can’t tell you how to use AI or whether you should or shouldn’t be using it. These are deeply individual considerations that we have to think about and navigate.

So what am I here to talk about then? Well, this talk is about our relationship with AI and, really, all technology. What it feels like to work alongside a tool that looks like it’s thinking, sounds like it’s thinking, but isn’t actually thinking at all.

And that’s where things get complicated. Because this technology is everywhere right now. It’s being pushed at us in classrooms, workplaces, workflows. And we don’t really have good models yet for how to think about what that does to us as humans.

So instead of answers, I want to offer questions. Questions I’ve been using myself, albeit imperfectly, to slow down, to notice, and to stay human while using these tools.

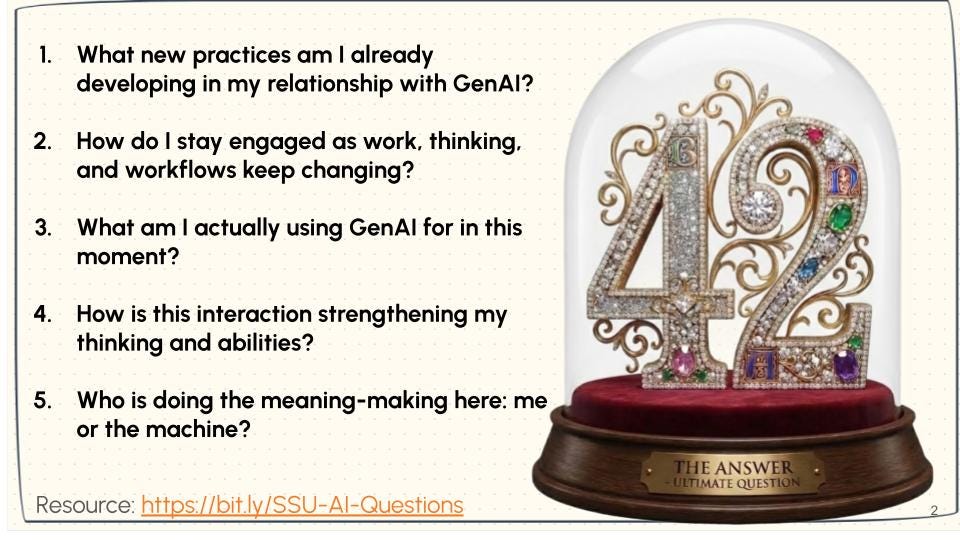

What new practices am I already developing in my relationship with GenAI?

If you know, you know (second slide, above). But if you don’t know, 42 is the answer to life, the universe, and everything: a long-running joke from Douglas Adams’s series The Hitchhiker’s Guide to the Galaxy.

In the book, these beings build a massive system (an AI, if you will) to calculate the answer to life, the universe, and everything. And all it spits out is this number: 42. That sends the plot off to figure out, well then, what’s the question?

I appreciate this, and I think it captures what’s important about our work and relationship with AI: we have to keep thinking about the questions we’re asking of a technology that gives us answers without our fully understanding how it gets to them.

The questions I’m offering shouldn’t have stable answers. You can’t answer them once and be done. And you definitely can’t answer them by asking ChatGPT. I know. I tried. It only confused me.

These questions are practices: questions you come back to again and again as the technology changes, as your work changes, and as you change. Some questions may feel very practical. Almost obvious.

Others might feel a little uncomfortable. Even inconvenient. Or like they slow things down at a moment when everything is telling you to speed up. That discomfort is actually part of what I want us to pay attention to.

I’m not asking you to stop using AI. I’m asking you to notice how you’re using it and what that relationship is practicing in you. More than anything else I have to offer, this is the thing that will help YOU answer this going forward.

Let’s start concretely with practice. Because whether we’ve thought about it or not, all of us are already developing practices with these tools.

The way we prompt. The way we verify answers…or don’t. The way we accept outputs, revise them, or paste them somewhere else. The way we reach for AI when we’re rushed, overwhelmed, or just don’t care that much about the task in front of us.

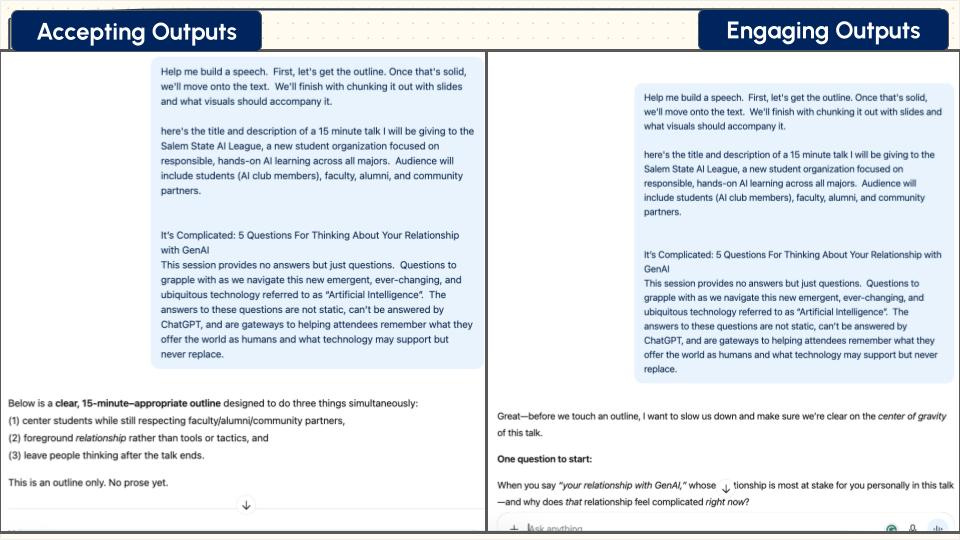

None of that is neutral. I want to show what this looks like in practice, so I’m going to do a bit of “showing what’s behind the curtain” here: how I used GenAI compared with how people often use it.

The screenshots on the left show practices with almost no intentionality: the AI leads and the user follows. On the right is the more intentional approach.

So on the left, I just had ChatGPT build this talk. I gave it some context but, really, let it make and build all the things. On the right, I used nearly the same prompt but with a custom GPT that had specific instructions not to give me answers and instead to keep asking me questions. I include those instructions in the resource document. I also share both chatlogs (Chatlog of the AI talk without questioning; Chatlog of the AI talk with questioning), the slides from both, and the text from both. I want you to see the difference.

Same technology. Very different relationship. At this stage, I don’t want you to judge either one. Just notice: what kinds of practices do you already see yourself in? As you’re looking at this, ask yourself silently: Where do I already do something like this? Where don’t I? Where should I?

How do I stay engaged as work, thinking, and workflows keep changing?

This next question builds directly on the first, and I know it is hard for all of us. I certainly struggle with it. After noticing the practices we’re developing, the next challenge is staying engaged as everything keeps changing.

We have to grapple with the fact that the work is changing, the workflows are changing, and the expectations around speed and output are changing.

And that can be exhausting. I’m deeply tempted by AI when I feel behind or rushed…or just overwhelmed. In all honesty, when I don’t really care that much about the task in front of me but know it still needs to get done, I really want to lean on AI, even when the task is important. It’s so very easy to slide into autopilot in these moments. It feels efficient, and it relieves that pressure. Yet it often comes at the cost of engagement.

So when you’re looking at these screenshots, I want you to notice something slightly different than before.

Not what the AI is producing, but where the human attention is.

Where am I still thinking? Where am I still making decisions? In the case of this talk: what is going to land better, and what is going to feel more authentic and valuable?

Let’s pause here for just a moment.

We’ve been talking about practices and workflows: what we’re doing with these tools, and how we’re adapting. The next set of questions shifts the focus. Instead of asking what we’re doing with AI, we start asking what these practices might be doing to us. Things might get a little more personal. And maybe a little less comfortable.

So to alleviate any forthcoming tension, I give you, without any AI whatsoever, 3 photos of my cats, because whenever I have to face something uncomfortable, these are what keep me grounded.

And I probably just regained at least 3 people’s attention with that, but possibly lost 4.

What am I actually using GenAI for in this moment?

This is the question where I want you to recognize yourself. Not in an abstract way but in a very specific, situational way.

What am I actually using GenAI for right now?

Am I using it to explore an idea? To push my thinking? To generate options I can work against? Or am I using it to avoid something hard? To get unstuck quickly? To produce something that looks finished without really engaging with it?

None of those answers are inherently good or bad. What matters is whether we’re aware of the choice we’re making in that moment.

Just notice the shape of your interactions. How many turns are there? Who’s doing more of the work? And where do the decisions seem to be happening?

This is the question I keep coming back to myself. Especially when I’m tired and rushed. Because naming how we’re using the tools gives us back a bit more agency. And that matters more than we might expect.

How is this interaction strengthening my thinking and abilities?

This question is about where friction shows up.

AI doesn’t think. It mimics thinking. And it does that really well, so well that we come up with terms like “hallucination” that lead us to attribute more human qualities to it than it has.

So the question becomes: is this interaction actually pushing my thinking forward, or is it quietly stepping around it? If we are to be professionals in the world, we do have to learn to work with these tools, but that doesn’t mean we sacrifice our own analytical abilities.

There’s a belief baked into these tools that struggle means inefficiency. That using AI to bypass the struggle is “productive.” That if something feels hard, we should remove the resistance.

But we know from learning science and from lived experience that some friction is essential. It’s how learning sticks in our minds and bodies. It’s how confidence gets built. It’s how we actually change our minds. You didn’t achieve something amazing in your life–whatever that was–because it was easy.

Every success story that we respect comes with a narrative about friction, and so we have to be vigilant when tools remove friction and consider where, when, and how that might be beneficial or problematic.

I can use AI to bypass friction or I can use it to stay inside the struggle. To ask better questions, to surface gaps, to work against ideas. And that friction actually produces growth.

So as you look at these interactions, ask yourself: Where is the resistance happening?

And just as importantly: What happens when it’s gone?

Who is doing the meaning-making here: me or the machine?

This is the question I want to linger with. Because meaning-making isn’t neutral. It’s not just about producing something that looks right.

Whoever does the meaning-making decides:

What counts as good work?

What gets trusted?

What gets carried forward?

AI has no stake in any of that for our individual lives. It has no empathic interest in meaning-making in our lives. It has no body. No consequences. No responsibility.

So when we outsource meaning-making, it doesn’t disappear. It just lands somewhere else. And that comes back to impact us, whether we’ve thought about it or not.

This question should unsettle you just enough to notice where your responsibility actually is. If we cede our agency, responsibility, and meaning-making to AI, then what do we as humans actually get to say is ours? What do we lose as learners, professionals, and just people in a society that is already fraying significantly as we look around?

I don’t want to end on discomfort. I want to end on agency. Because the point of these questions isn’t to make you afraid of AI. It’s to remind you that you still have power here. We have to grapple with these questions with every technology.

As college students, you have the luck, good or bad, to think about them deeply as you move further into your fields. You can help your peers, your future employers, and your future employees think differently in this moment, but that means you also have to ask yourself these questions.

You can pause before prompting.

You can reject an output.

You can tell the tool to ask you questions instead of giving you answers.

You can decide what counts as your work.

You can even decide at times to step away.

None of that requires permission. It just requires noticing. AI can support us. It can accelerate things. It can help us see possibilities. But it can’t replace what you bring as a human: your judgment, your care, your capacity to make meaning.

That part still belongs to you. And the more you practice holding onto it, the more it shows up: not just in your work, but in how you move through the world.

As I said, I don’t bring any answers, but I hope my questions help you realize that it’s not answers you need.

Thank you!

The Update Space

Upcoming Sightings & Shenanigans

Continuous Improvement Summit, February 2026

EDUCAUSE Online Program: Teaching with AI. Virtual. Facilitating sessions: ongoing

Recently Recorded Panels, Talks, & Publications

Online Learning in the Second Half with John Nash and Jason Johnston: EP 39 - The Higher Ed AI Solution: Good Pedagogy (January 2026)

The Peer Review Podcast with Sarah Bunin Benor and Mira Sucharov: Authentic Assessment: Co-Creating AI Policies with Students (December 2025)

David Bachman interviewed me on his Substack, Entropy Bonus (November 2025)

The AI Diatribe Podcast with Jason Low (November 2025): Episode 17: Can Universities Keep Pace With AI?

The Opposite of Cheating Podcast with Dr. Tricia Bertram Gallant (October 2025): Season 2, Episode 31.

The Learning Stack Podcast with Thomas Thompson (August 2025): “(i)nnovations, AI, Pirates, and Access”

Intentional Teaching Podcast with Derek Bruff (August 2025): Episode 73: Study Hall with Lance Eaton, Michelle D. Miller, and David Nelson.

Dissertation: Elbow Patches To Eye Patches: A Phenomenographic Study Of Scholarly Practices, Research Literature Access, And Academic Piracy

AI Syllabi Policy Repository: 200+ policies (always looking for more; submit your AI syllabus policy here)

Finally, if you are doing interesting things with AI in the teaching and learning space, particularly for higher education, consider being interviewed for this Substack or even contributing. Complete this form, and I’ll get back to you soon!

We periodically host small-group workshops and leadership sessions for higher ed teams. You can learn more about our current offerings here.

AI+Edu=Simplified by Lance Eaton is licensed under Creative Commons Attribution-ShareAlike 4.0 International
